Most enterprise AI initiatives fail not because of bad technology, but because teams pick the wrong problems to solve. Here is how to find the right AI agent use cases for your business.

What Is an AI Agent, Exactly?

An AI agent is software that can observe a situation, reason about what to do, take action, and adjust its behavior based on what happens next, without a human deciding every step. Unlike a chatbot that waits for a prompt and returns an answer, an agent can chain multiple actions together, use tools like search or databases, and work toward a goal over several steps.

Think of the difference like this: a traditional automation script follows fixed steps. An AI agent follows intent. You tell it what you want to achieve, and it figures out how to get there within defined boundaries.
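The observe, decide, act, and check cycle described above can be sketched as a small loop. This is a toy illustration, not a production design: `run_agent`, `choose_action`, and the two tools are hypothetical names, and `choose_action` is a hard-coded stand-in for what would be a model call in a real agent.

```python
from typing import Callable

State = dict
Tool = Callable[[State], State]

def run_agent(goal_met: Callable[[State], bool],
              choose_action: Callable[[State], str],
              tools: dict[str, Tool],
              state: State,
              max_steps: int = 5) -> State:
    """Loop until the goal is met, an unknown action is requested, or the step budget runs out."""
    for _ in range(max_steps):
        if goal_met(state):              # check results
            break
        action = choose_action(state)    # decide what to do next (a model call in a real agent)
        if action not in tools:          # defined boundaries: only known tools may run
            state = {**state, "escalated": True}
            break
        state = tools[action](state)     # take the action and observe the new state
    return state

# Hypothetical tools for a ticket-routing goal.
tools = {
    "lookup_account": lambda s: {**s, "account": "ACME"},
    "route_ticket": lambda s: {**s, "routed_to": "billing"},
}

def choose_action(state: State) -> str:
    # Stand-in for model reasoning: look up the account first, then route.
    return "lookup_account" if "account" not in state else "route_ticket"

final = run_agent(lambda s: "routed_to" in s, choose_action, tools,
                  {"ticket": "Invoice is wrong"})
print(final)
```

The caller states the goal (`goal_met`) rather than the sequence of steps, and the tool whitelist plus `max_steps` are one simple way to express "defined boundaries".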

Key distinction

An AI agent is not just a chatbot with extra features. It is a system that can plan, act, check results, and course-correct. That capability is powerful, but it also means a wrong problem choice is far more costly to undo.

Because agents take real actions (sending emails, updating records, calling APIs, generating documents), the stakes for picking the right problem are much higher than for a simple search tool or dashboard.

Why Problem Selection Matters More Than Technology

Enterprise teams often start by asking: “What can AI agents do?” The better question is: “Which of our business problems are the right ones for AI to solve?”

The difference in wording is small, but the difference in outcome is large. Starting from capability leads to solutions in search of problems. Starting from business needs leads to tools that people actually use and that deliver measurable returns.

Most AI projects fail at problem definition, not at model performance.

A Gartner survey found that 30% of enterprise AI pilots are abandoned before reaching production, with the most common reason being that the problem was either too ambiguous, too complex, or not genuinely painful enough to justify the investment. Getting problem selection right before you write a single line of code is the highest-leverage thing an enterprise team can do.

That said, not every hard problem is a bad one, and not every easy problem is a good one. The goal is to identify problems where agents provide a meaningful, sustainable advantage over your current approach.

The Five Criteria for a Good AI Agent Use Case

Use these five criteria to evaluate any business problem before committing to it. A problem that meets all five is a strong candidate. A problem that fails two or more is worth reconsidering.

1. It is repetitive and rule-governed

The task follows a predictable pattern, even if the inputs vary. Examples: reviewing a contract for standard clauses, triaging incoming support tickets, and processing expense reports against a policy. The agent does not need to make judgment calls that require deep institutional knowledge each time.

2. It has a clear, measurable goal

You can define what “done” looks like. The agent needs a target it can work toward and a way to know when it has succeeded. Vague objectives like “improve customer satisfaction” are too open-ended. Specific ones like “classify and route 500 daily support tickets to the correct team within 2 minutes” are not.

3. The cost of a mistake is recoverable

Agents make errors. A good candidate problem is one where errors are detectable, reversible, or low-stakes enough that they do not cause significant harm before a human can catch them. Financial fraud decisions or surgical scheduling, for example, have a very different error profile from summarizing meeting notes.

4. It consumes significant human time or creates a bottleneck

The problem is actually painful enough to justify building a solution. If the current process takes one person five minutes a week, an agent will not move the needle. If it takes a team of ten people two days per cycle and delays downstream work, that is a real candidate.

5. The required data and systems are accessible

The agent needs to read information and take action somewhere. If the relevant data is locked in legacy systems with no API access, or if the permissions needed to act on the data do not exist, the problem is technically blocked regardless of how good the model is.
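The five criteria above can be captured as a simple checklist. The sketch below is one possible encoding, with hypothetical names (`CriteriaCheck`, `assess`). The article gives no verdict for a problem that fails exactly one criterion, so the "borderline" label is an assumption.

```python
from dataclasses import dataclass, fields

@dataclass
class CriteriaCheck:
    repetitive_and_rule_governed: bool
    clear_measurable_goal: bool
    mistakes_recoverable: bool
    significant_time_or_bottleneck: bool
    data_and_systems_accessible: bool

def assess(check: CriteriaCheck) -> str:
    """Apply the rule of thumb: all five met is strong, two or more failed is a warning."""
    failed = [f.name for f in fields(check) if not getattr(check, f.name)]
    if not failed:
        return "strong candidate"
    if len(failed) >= 2:
        return "reconsider"
    return "borderline"  # assumption: exactly one failure is not covered by the article

# Example: expense report processing where the finance system has no API access yet.
print(assess(CriteriaCheck(True, True, True, True, False)))
```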

How to Score and Rank AI Agent Use Cases for Your Business

Once you have a list of business problems that can be solved by AI, score each one across four dimensions.

Impact

Time saved, revenue protected, error rate reduced, headcount freed for higher-value work. Be specific. “Significant savings” is not a score.

Feasibility

Is the data clean? Are APIs accessible? Does the problem have clear success criteria? Can you define a test suite? Feasibility drops fast when any of these is no.

Risk

What happens if the agent produces the wrong output? Rate problems lower when errors are hard to detect, irreversible, or expose the business to regulatory or reputational harm.

Speed to value

A quick win that proves the approach is worth more than a 12-month build that the organization loses faith in before it ships. Favor problems where you can get to a working prototype in 4–8 weeks.

Rank your problems. The ones with high impact, high feasibility, manageable risk, and a short path to value are where you start.
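One way to turn the four dimensions into a ranking is a simple additive score. The sketch below assumes a 1 to 5 scale and equal weights, neither of which comes from the article, and the candidate names and numbers are made up for illustration. Risk is inverted so that a riskier problem scores lower.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    name: str
    impact: int          # 1 (low) to 5 (high)
    feasibility: int     # 1 (low) to 5 (high)
    risk: int            # 1 (low risk) to 5 (high risk)
    speed_to_value: int  # 1 (slow) to 5 (fast)

def score(uc: UseCase) -> int:
    # Risk counts against a use case, so invert it before adding.
    return uc.impact + uc.feasibility + (6 - uc.risk) + uc.speed_to_value

# Illustrative scores only; replace with your own assessments.
candidates = [
    UseCase("Support ticket triage", impact=4, feasibility=4, risk=2, speed_to_value=5),
    UseCase("Autonomous contract negotiation", impact=5, feasibility=2, risk=5, speed_to_value=1),
    UseCase("Invoice matching", impact=4, feasibility=3, risk=2, speed_to_value=4),
]

ranked = sorted(candidates, key=score, reverse=True)
for uc in ranked:
    print(f"{score(uc):>2}  {uc.name}")
```

If one dimension matters more in your organization, for example risk in a regulated business, a weighted sum is a small change to `score`.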

Common mistake

Teams often start with the most visionary use case, the one that gets the executive presentation approved. That problem is usually the hardest, the riskiest, and the slowest to deliver value. Start one or two tiers below the vision, prove the approach, then move up.

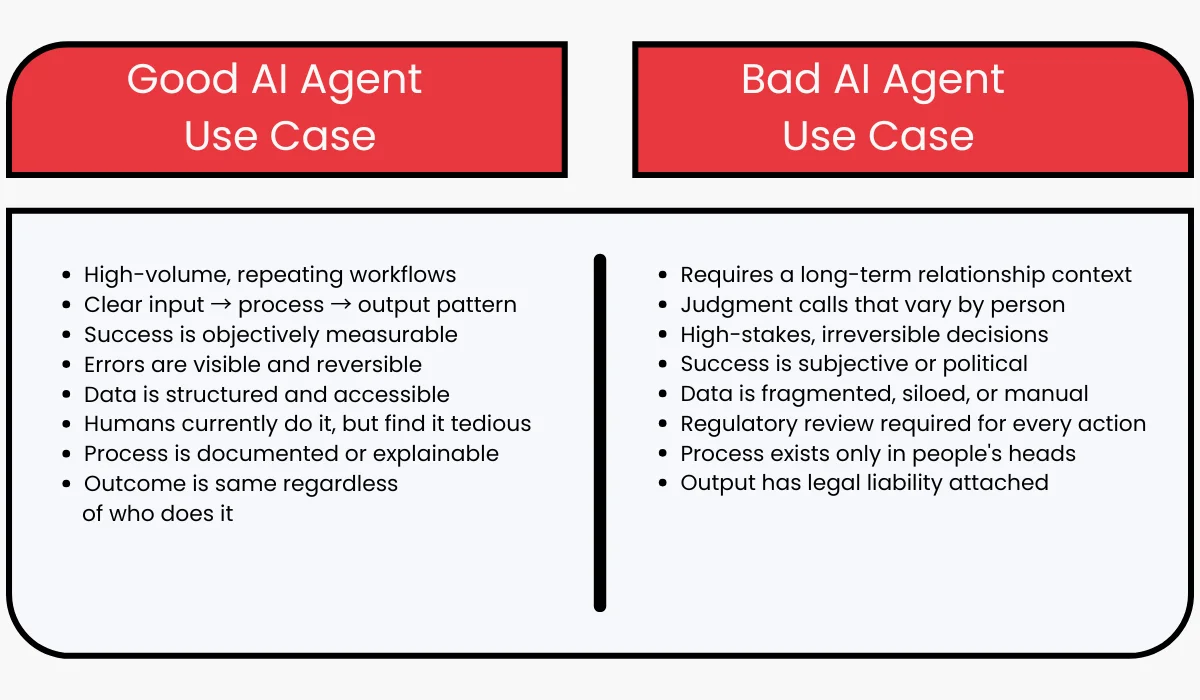

What Makes a Problem a Good vs. Bad Fit

These two categories are not about problem size; a large, complex problem can still be a good fit if it meets the criteria. This is about the problem’s structure.

Real AI Agent Use Case Examples

These AI agent use cases are not exhaustive, but they illustrate the pattern of problems that consistently deliver in enterprise environments. Notice that every example has a specific, bounded scope, not “automate HR” but “automate the first-pass screening of inbound job applications against a defined criteria checklist.”

- Contract review and flagging

Review incoming vendor or partner contracts for non-standard clauses, flag deviations from templates, and summarize key terms for legal team sign-off. Humans make the final call; the agent eliminates the initial read-through.

- Invoice and expense processing

Extract line items from invoices, match to purchase orders, flag discrepancies, and prepare approval packets. Cuts a multi-day manual process to hours, with humans reviewing exceptions only.

- Support ticket triage and routing

Read incoming support tickets, classify by issue type and urgency, pull relevant account context, draft a suggested response, and route to the right team. The AI agent handles intake; human support agents with senior access handle the response queue.

- Candidate screening summary

For each application, the agent compares CV content against the job requirements, produces a structured scorecard, and flags the top candidates for recruiter review. Reduces screening time per role from days to hours.

- Incident triage and runbook execution

When an alert fires, the agent checks monitoring dashboards, identifies probable cause, runs defined diagnostic steps from a runbook, and escalates with a situation summary, cutting mean time to resolution.

- Account research and brief generation

Before a sales call, the agent pulls recent news about the prospect, flags relevant product use cases, checks CRM history, and produces a one-page brief. Reps arrive prepared without spending 45 minutes on manual research.

Where to Look Inside Your Organization

Most enterprise teams do not lack good AI agent use cases; they lack a systematic way to surface them. These four approaches consistently surface the highest-value problems.

- Talk to the people doing the work, not just their managers

Managers see process diagrams. The people doing the work know where the time actually goes. Ask them: “What part of your day feels the most tedious?” and “What do you do over and over that feels like it could be automated?” The answers are usually specific and actionable.

- Look at your highest-volume workflows first

Volume is a multiplier. A task that takes 10 minutes but happens 500 times a day consumes about 83 hours of human time every day. Find your high-frequency processes in operations, customer service, finance, and legal, then evaluate them against the five criteria.

- Audit your queue backlogs

Anywhere work piles up (support queues, approval queues, review queues) is a signal. Backlogs mean demand exceeds human capacity. AI agents can either eliminate the queue or dramatically shorten it. Both are valuable, and backlogs are easy to quantify.

- Find the work that blocks other work

Some tasks are bottlenecks for downstream processes. A contract review that takes two weeks delays procurement. A slow invoice processing cycle delays vendor payment. Automating the bottleneck creates compound value: the primary task gets faster, and everything downstream accelerates too.
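The volume arithmetic from the high-volume workflows point (10 minutes, 500 times a day) is easy to reproduce for your own processes. The helper name `hours_per_day` is only illustrative.

```python
def hours_per_day(minutes_per_task: float, tasks_per_day: int) -> float:
    """Total human hours a task consumes each day."""
    return minutes_per_task * tasks_per_day / 60

# 10 minutes x 500 occurrences = 5,000 minutes, or roughly 83 hours per day.
print(round(hours_per_day(10, 500), 1))
```

Running the same calculation across a list of candidate workflows gives a quick, comparable estimate of where the human time actually goes.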

Red Flags That Signal a Bad AI Agent Use Case

These patterns show up early in AI agent projects that eventually get cancelled or restarted. Catch them before you invest.

- No one can define what success looks like. If the team cannot agree on how they will know the agent is doing a good job, they cannot build one. “Better” is not a success metric. Define it before you start.

- The process exists only in someone’s head. If the task depends on tribal knowledge, relationships, or institutional memory that has never been written down, the agent has nothing to learn from. Document the process first. Often, this exercise alone reveals that the process is too inconsistent to automate.

- Every case is an exception. Some processes look structured on paper but are actually judgment-heavy in practice. If your team spends most of its time in “it depends” territory, the pattern an agent could learn may not exist in a usable form.

- The project is driven by technology interest, not business pain. Projects that start from technology enthusiasm without a clear, painful business need almost always stall when the team realizes the work required versus the business value delivered.

- There is no plan for human oversight. AI agents should not operate without any human review. If your plan does not include checkpoints, escalation paths, or review loops for edge cases, it is not a plan yet.

The Golden Rule

Do not build a general-purpose agent. Build a specific AI agent for a specific problem. You can expand the scope after you have proven it works. Agents that try to do everything tend to do nothing reliably.

The Right Problem is Half the Solution

Enterprise AI agents are not just a technology; they are a business strategy. The teams that succeed are the ones that spend as much time choosing the right problem as they spend building the solution. Use the criteria, do the discovery work, validate before you commit resources, and start narrow. The vision can wait. The first proof of value cannot.

FAQs

What is the difference between an AI agent and traditional automation like RPA?

RPA follows a fixed set of rules and breaks when the interface changes. AI agents can understand context, handle input variations, and adapt when the situation is not exactly as scripted. RPA is good for pixel-perfect, deterministic tasks. AI agents handle the messier middle ground where inputs vary, but the goal stays consistent.

How do I know if a business problem is too complex for an AI agent?

A problem is likely too complex if it requires integrating context across years of relationship history, involves legal or ethical judgment that could cause significant harm if wrong, or cannot be expressed as a goal with measurable success criteria. Start simpler. You can always increase complexity once you have a working foundation.

Do we need a large dataset to train an AI agent?

No. Most enterprise AI agents use general-purpose foundation models (like Claude, GPT-4, or similar) combined with your specific context, data, and instructions. You do not train from scratch. You set it up, guide it, and connect it to your systems. This dramatically lowers the barrier to entry compared to traditional ML.

What does a successful AI agent pilot look like?

A successful pilot handles a specific, scoped task reliably for at least four to six weeks in a real environment. It has defined metrics (accuracy, time saved, error rate) and a clear comparison against the baseline. It involves end users from day one, not just in the demo. And it produces an honest assessment of what worked, what did not, and what would be needed to scale.

How do we get organizational buy-in for an AI agent project?

Frame it in terms of the business problem, not the technology. Quantify the cost of the current process: time, headcount, error rate, and downstream delays. Show how the agent reduces that cost and what the team will be able to do with the time recovered. Start with a department that has a credible pain point and a willing champion, then expand from the evidence of that first win.

What role should humans play once an AI agent is deployed?

Humans should stay in the loop for high-stakes decisions, edge cases, and periodic quality review. A well-designed agent reduces human effort; it does not eliminate human judgment. Design your agent with clear escalation triggers: when confidence is low, the situation is unusual, or the downstream consequences are significant, the agent should hand off to a person rather than guess.
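The three escalation triggers named above (low confidence, an unusual situation, significant downstream consequences) can be expressed as a single check. This is a minimal sketch: `Decision`, `should_escalate`, and the 0.8 confidence threshold are assumptions for illustration, and how an agent estimates its own confidence is left open.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    confidence: float       # 0.0 to 1.0, however the agent estimates it
    is_unusual: bool        # input does not resemble cases seen before
    high_consequence: bool  # acting wrongly would be costly or hard to reverse

def should_escalate(d: Decision, min_confidence: float = 0.8) -> bool:
    """Hand off to a person if any one of the three triggers fires."""
    return d.confidence < min_confidence or d.is_unusual or d.high_consequence

# A confident, routine, low-stakes decision proceeds; anything else goes to a human.
print(should_escalate(Decision(confidence=0.95, is_unusual=False, high_consequence=False)))
print(should_escalate(Decision(confidence=0.95, is_unusual=False, high_consequence=True)))
```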

How long does it take to build and deploy an enterprise AI agent?

A scoped, well-defined agent can reach a working prototype in four to eight weeks. Getting to production-grade reliability with monitoring, error handling, security review, and integration typically takes three to six months for a first deployment. Projects that take longer usually have scope, data access, or stakeholder alignment problems that the timeline reveals but does not cause.

Which departments are the best starting point for AI agents in a large enterprise?

Operations, customer support, finance, and legal tend to have the highest concentration of repetitive, high-volume processes with clear success criteria. IT operations is also a strong starting point, especially for incident response and documentation. Sales enablement (account research, content generation, CRM hygiene) is increasingly common as a first deployment area because the feedback loop is fast and the output is easy to evaluate.