“Machine learning algorithms” and “machine learning models” are two terms that are often used interchangeably. Knowing the difference between them is essential, especially for those offering machine learning development services.

It will also help you make the most of machine learning to meet your clients’ requirements. Think of it this way: algorithms are the tools data scientists use to sort through data and find patterns. Models are the result of running these algorithms, and they serve as the engines that drive forecasts and decisions based on new data.

Although this may sound simple, there’s a lot more to the tale. Let’s explore the reasons that make models and algorithms distinct but interdependent parts of machine learning.

What is a Machine Learning Algorithm?

An algorithm is a set of rules or instructions designed to learn patterns from input data. ML algorithms differ according to the purpose of the machine learning project, the way data is fed to the algorithm, and what you intend the algorithm to “learn.”

They fall into five broad categories:

1. Supervised Learning

In supervised learning, data scientists supply the algorithm with labeled data, in which every input is paired with its known output. The algorithm is trained on this data and learns to map inputs to outputs.

The algorithm makes predictions and then adjusts according to its mistakes until it can consistently give accurate results. This type of training is typically used for tasks like regression and classification, aiming to predict an outcome based on the input characteristics.

2. Unsupervised Learning

Unsupervised learning is the process of training algorithms using data that lack explicit labels. In this case, the data supplied to the algorithm does not have specified outputs. The algorithm’s task is to detect patterns and relationships in the information.

This approach is especially beneficial for clustering, in which data points are grouped by similarity, or for dimensionality reduction, in which the objective is to reduce the number of features while keeping the key characteristics of the data.

3. Semi-Supervised Learning

Semi-supervised learning combines supervised and unsupervised learning. It uses a small amount of labeled data together with a larger amount of unlabeled data. The algorithm begins by learning from the labeled data and uses what it learns to assign labels to the unlabeled data.

This approach is particularly beneficial when acquiring labeled data is costly or takes a long time. It also allows the algorithm to draw on the abundance of unlabeled data to enhance its performance.

4. Reinforcement Learning

Reinforcement learning trains an algorithm to make a sequence of decisions by rewarding it for good actions and penalizing it for bad ones. The system learns by trial and error and teaches itself to maximize a reward signal over time.

The algorithm interacts with an environment and adjusts its actions to produce the best result. This kind of learning is often used when decisions must be made sequentially, as in game playing, robotics, and autonomous vehicles.

5. Self-Supervised Learning

Self-supervised learning (SSL) is a newer, still-developing method that bridges the divide between unsupervised and supervised learning. In this method, the machine learning algorithm creates its own labels from the input data and uses them to learn about and make predictions for specific data elements. This technique is especially effective in natural language processing (NLP) and computer vision, where the algorithm can predict some parts of the data from other parts, improving its comprehension and ability to generalize.

Types of Machine Learning Algorithms

Simply put, a machine learning algorithm is a process that lets computers learn from data and make predictions. Instead of telling the computer exactly what to do, we provide it with large amounts of data and let it find patterns, relationships, and insights.

You must know about these types of machine-learning algorithms.

1. Linear Regression

Linear regression is a machine learning technique for forecasting and predicting values within a continuous range, such as sales figures or house prices. It’s a method borrowed from statistics. It is used to establish a relationship between an input variable (X) and an output variable (Y) that can be expressed as a straight line.

In simple words, linear regression takes a set of data points with known input and output values and finds the line that best fits them. The line, referred to as the “regression line,” serves as a prediction model. With this model, one can forecast or estimate the output value (Y) for a given input value (X).

Linear regression is used primarily for predictive modeling, not for classification. It helps us understand how changes in the input variable affect the output variable. By studying the slope and intercept of the regression line, we can better understand the relationship between the variables and make predictions based on this knowledge.
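To make the idea of a best-fitting line concrete, here is a minimal sketch of least-squares fitting for a single input variable, written in plain Python. The function names and the house-price numbers are made up for illustration; a real project would normally rely on a library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one input variable: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(line, x):
    """Use the regression line (the trained model) to estimate Y for a new X."""
    slope, intercept = line
    return slope * x + intercept


# Hypothetical data: house size (in 100 m^2) and price (in $100k)
sizes = [1.0, 2.0, 3.0, 4.0]
prices = [3.0, 5.0, 7.0, 9.0]

line = fit_line(sizes, prices)
estimate = predict(line, 5.0)
```

The slope tells us how much the predicted price changes per unit of size, and the intercept is the predicted value when the input is zero.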

2. Logistic Regression

Logistic regression, sometimes called “logit regression,” is a supervised learning algorithm primarily used to solve binary classification problems. It is typically used to determine whether an input belongs to a particular class, like deciding whether or not an image shows a certain object.

Logistic regression can be extended to sort inputs into multiple classes. In practice, however, it is typically employed to classify outputs into two classes: primary and non-primary.

To do this, logistic regression produces a probability between 0 and 1 and applies a threshold that determines the binary classification. For instance, output values between 0 and 0.49 can be classified in one category, while values that fall between 0.50 and 1.00 are classified in the second category.

Therefore, despite its name, logistic regression is used to classify data rather than to predict continuous values. It allows us to assign an input to one of two categories according to the probability estimate and an established threshold.

This makes logistic regression a valuable tool for performing tasks like image recognition, spam email detection, or medical diagnosis when we have to categorize information into distinct categories.
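The probability-plus-threshold step can be sketched in a few lines. This is only an illustration of the prediction side: the weights and bias below are hypothetical values that a training procedure would normally learn from data.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_probability(weights, bias, features):
    """Weighted sum of the features passed through the sigmoid function."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def classify(probability, threshold=0.5):
    """Apply the decision threshold: 1 for the primary class, 0 otherwise."""
    return 1 if probability >= threshold else 0


# Hypothetical learned parameters for a two-feature spam filter
weights = [1.5, -2.0]
bias = -0.5

probability = predict_probability(weights, bias, [2.0, 0.5])
label = classify(probability)
```

Moving the threshold away from 0.5 trades one kind of error for another, which is useful when false positives and false negatives have different costs.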

3. Naive Bayes

Naive Bayes is a family of supervised learning algorithms used to build predictive models for binary or multi-class classification tasks. It is founded on Bayes’ theorem and works with conditional probabilities, estimating the probability of a class from a combination of factors while assuming that these factors are independent of one another.

Let’s look at a program that recognizes plants using the Naive Bayes method. The algorithm considers particular factors like perceived size, color, and shape to classify pictures of plants. While each aspect is considered separately, the algorithm can combine these factors to evaluate the likelihood that an object is a plant of a specific species.

Naive Bayes is based on the assumption of independence between the factors, which simplifies the calculations and allows the algorithm to work efficiently on large databases.

It is ideal for tasks such as document classification, filters for spam, sentiment analysis, and many other areas where each factor can be assessed separately but still help to classify the entire dataset.
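As a rough sketch of how the independence assumption turns into arithmetic, the following plain-Python example multiplies a class prior by one probability per feature. The plant data, the function names, and the choice of add-one smoothing are all assumptions made for illustration.

```python
from collections import Counter, defaultdict


def train_naive_bayes(rows, labels):
    """Count how often each class occurs and how often each feature value occurs per class."""
    class_counts = Counter(labels)
    # feature_counts[label][feature_index][value] -> number of occurrences
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    for row, label in zip(rows, labels):
        for index, value in enumerate(row):
            feature_counts[label][index][value] += 1
    return class_counts, feature_counts


def predict_naive_bayes(model, row):
    """Pick the class with the highest prior * product of per-feature probabilities."""
    class_counts, feature_counts = model
    total = sum(class_counts.values())
    scores = {}
    for label, count in class_counts.items():
        score = count / total  # prior P(class)
        for index, value in enumerate(row):
            # Add-one smoothing; the "+ 2" assumes two possible values per feature
            # in this toy data set, so unseen values do not zero out the product.
            score *= (feature_counts[label][index][value] + 1) / (count + 2)
        scores[label] = score
    return max(scores, key=scores.get)


# Hypothetical plant observations: (leaf color, leaf shape)
rows = [("green", "round"), ("green", "long"), ("red", "round"), ("red", "round")]
labels = ["basil", "basil", "rose", "rose"]

model = train_naive_bayes(rows, labels)
guess = predict_naive_bayes(model, ("green", "long"))
```

Each feature contributes its own factor to the score, which is exactly the "considered separately, then combined" behavior described above.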

4. Decision Tree

The decision tree is a supervised algorithm for classification and predictive modeling tasks. It’s like a flowchart beginning with a root node, which asks a particular question about the data.

Based on the response provided, the data is then directed across various branches to internal nodes that ask additional questions and direct the data to branches that follow. The process continues until the data reaches a final node, which is also referred to as a leaf, which is the point at which no branching takes place.

The decision tree algorithm is a favorite in machine learning because it can quickly deal with large amounts of data. Its structure also makes the decision process easy for people to follow and understand. By asking a series of questions and following the matching branches of the tree, we can predict or classify outcomes based on the data’s features.

This ease of use and interpretability make decision trees useful in machine learning development, particularly when dealing with complex data.
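The flowchart idea can be shown with a tiny hand-written tree. Note that this sketch only walks an existing tree; it does not learn the questions from data, and the loan-approval features and thresholds are invented for the example.

```python
# Each internal node asks "is sample[feature] >= threshold?"; leaves are plain strings.
loan_tree = {
    "question": ("income", 50),
    "yes": {
        "question": ("debt", 20),
        "yes": "reject",
        "no": "approve",
    },
    "no": "reject",
}


def predict(node, sample):
    """Follow the branches from the root until a leaf (a final answer) is reached."""
    while isinstance(node, dict):
        feature, threshold = node["question"]
        node = node["yes"] if sample[feature] >= threshold else node["no"]
    return node


decision = predict(loan_tree, {"income": 60, "debt": 10})
```

Because every prediction is just a path of yes/no answers, the reasoning behind a result can be read directly from the tree.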

5. Random Forest

A random forest is a collection of decision trees used for classification and predictive modeling. Instead of relying on one decision tree, a random forest blends the results of multiple decision trees to produce more accurate predictions.

In a random forest, many decision trees (sometimes hundreds or even thousands) are trained individually on different random samples drawn from the training data. This sampling method is known as “bagging.” Every decision tree is trained independently on its own random sample.

After training, the random forest takes a new input and feeds it into every decision tree. Each tree generates a prediction, and the random forest tallies the results. For classification, the most common prediction across all decision trees is selected as the final prediction; for regression, the predictions are averaged.

Random forests address a common issue, “overfitting,” which can occur when relying on a single decision tree. Overfitting happens when a tree is too tightly aligned with its training data and becomes less accurate when presented with new data.
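The two mechanical pieces of a random forest, drawing a random sample for each tree and tallying the votes, can be sketched as follows. Training the individual trees is left out, and the vote list stands in for the outputs of five hypothetical trees.

```python
import random
from collections import Counter


def bootstrap_sample(rows, rng):
    """Draw len(rows) items with replacement: the sampling step of bagging."""
    return [rng.choice(rows) for _ in rows]


def majority_vote(predictions):
    """Return the most common prediction among the trees."""
    return Counter(predictions).most_common(1)[0][0]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
training_rows = list(range(10))
sample_for_one_tree = bootstrap_sample(training_rows, rng)

# Pretend five trained trees produced these predictions for one email
tree_votes = ["spam", "ham", "spam", "spam", "ham"]
forest_prediction = majority_vote(tree_votes)
```

Because each tree sees a slightly different sample, their individual mistakes tend to differ, and the vote smooths them out.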

6. K-Nearest Neighbor (KNN)

K-nearest neighbor (KNN) is a supervised machine learning algorithm used for classification and predictive modeling tasks. The term “K-nearest neighbor” reflects the algorithm’s strategy of determining an output based on its proximity to other points on a graph.

Let’s say we have a data set of labeled points, some marked blue and others marked red. If we want to classify a new data point, KNN looks at its closest neighbors on the graph.

The “K” in KNN refers to the number of nearest neighbors to be considered. For instance, if K is five, the algorithm will look at the five points closest to the new data point.

Based on the majority label among the K closest neighbors, the algorithm assigns a class to the new data point. For example, if the majority of the closest neighbors are blue points, the algorithm classifies the new data point as part of the blue group.

Furthermore, KNN can also be employed to perform prediction tasks. Instead of determining a classification name, KNN can estimate the value of an unidentified data point using the median or average of its nearest K neighbors.
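The blue and red example translates almost directly into code. This is a minimal sketch using Euclidean distance from the Python standard library; the coordinates and the function name are invented for illustration.

```python
import math
from collections import Counter


def knn_classify(train_points, train_labels, query, k=3):
    """Label the query point by majority vote among its k nearest training points."""
    distances = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical labeled points on a 2-D graph
points = [(1, 1), (1, 2), (2, 1), (6, 6), (7, 6), (6, 7)]
colors = ["blue", "blue", "blue", "red", "red", "red"]

new_point_color = knn_classify(points, colors, (2, 2), k=3)
```

For a prediction task, the vote would be replaced by the average of the neighbors’ values.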

7. K-Means

The K-means algorithm is an unsupervised algorithm widely used for clustering and pattern recognition tasks. It is designed to group data points into K clusters according to their proximity to each other. Like K-nearest neighbors (KNN), K-means clustering uses the concept of proximity to find patterns in data.

Each cluster is identified by a centroid, which is the real or imaginary central point of the cluster. K-means can be useful for huge data sets; however, it is sensitive to outliers.

Clustering algorithms are efficient for large-scale datasets and provide insight into the underlying structure of data by grouping similar points. They are useful in a variety of areas, such as customer segmentation, image compression, and anomaly detection.
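A common way to run K-means is to alternate between assigning points to the nearest centroid and moving each centroid to the mean of its points. The sketch below assumes that procedure, fixed starting centroids, and a fixed number of iterations; the data points are made up.

```python
import math


def kmeans(points, centroids, iterations=10):
    """Alternate assignment and update steps for a fixed number of iterations."""
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(point, centroids[i]),
            )
            clusters[nearest].append(point)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster)) if cluster else old
            for cluster, old in zip(clusters, centroids)
        ]
    return centroids, clusters


# Hypothetical 2-D data with two obvious groups
data = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
final_centroids, final_clusters = kmeans(data, centroids=[(0, 0), (10, 10)])
```

Since a centroid is a mean, a single extreme point can drag it away from the rest of its cluster, which is why outliers are a concern.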

8. Support Vector Machine (SVM)

The support vector machine (SVM) is a supervised learning algorithm widely employed for classification and predictive modeling. It is popular because it is reliable and can be effective even with a small amount of data. SVM algorithms work by forming a decision boundary known as the “hyperplane.” In two-dimensional space, the hyperplane is a line that divides two sets of labeled data.

SVM aims to identify the decision boundary that maximizes the margin between the two sets of labeled data. In other words, it looks for the widest gap between the two classes. Any new data item is classified according to the side of this boundary on which it falls.

It’s important to know that the hyperplane takes a different form in higher dimensions: in 3-D space it is a flat plane rather than a line. Combined with kernel functions, this allows SVM to deal with more complicated patterns and relationships within the data.
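Once a linear SVM has been trained, classifying a point comes down to checking which side of the hyperplane it falls on. The sketch below shows only that step; the weights and bias are hypothetical rather than learned, and the margin formula assumes the usual scaling in which the closest points have a decision value of +1 or -1.

```python
import math


def decision_value(weights, bias, point):
    """Signed score: positive on one side of the hyperplane, negative on the other."""
    return sum(w * x for w, x in zip(weights, point)) + bias


def classify(weights, bias, point):
    """Assign class +1 or -1 depending on the side of the boundary."""
    return 1 if decision_value(weights, bias, point) >= 0 else -1


def margin_width(weights):
    """Width of the gap between the two classes for a linear SVM: 2 / ||w||."""
    return 2 / math.sqrt(sum(w * w for w in weights))


# Hypothetical hyperplane x + y = 5 in two dimensions
weights = [1.0, 1.0]
bias = -5.0

side_a = classify(weights, bias, (1, 1))
side_b = classify(weights, bias, (4, 4))
gap = margin_width(weights)
```

Training an SVM amounts to searching for the weights and bias that make this gap as wide as possible while keeping the classes on their correct sides.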

9. Apriori

Apriori is an unsupervised learning algorithm used for predictive modeling, especially in the field of association rule mining.

The Apriori algorithm was developed in the early 1990s to find association rules between sets of items. It is often employed for pattern recognition and forecasting tasks, like determining the likelihood of a buyer purchasing one item after purchasing another.

The Apriori algorithm analyzes transactional data, typically stored in a relational database. It finds sets of items that frequently appear together in transactions. These frequent item sets can then be used to create association rules.

For instance, if a customer usually purchases products A and B simultaneously, an association rule could be developed to indicate that buying A will increase the probability of purchasing B.

A machine learning development company can use this algorithm for developing applications for the eCommerce industry where identifying customer behavior and purchasing patterns is crucial to increase sales and customer experience.
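The core quantities behind association rules, support and confidence, are easy to compute by hand. The sketch below uses a made-up set of shopping baskets and only goes as far as item pairs, so it illustrates the Apriori pruning idea rather than the full algorithm.

```python
from itertools import combinations

# Hypothetical shopping baskets
transactions = [
    {"bread", "butter"},
    {"bread", "butter", "milk"},
    {"bread", "milk"},
    {"butter"},
    {"bread", "butter", "jam"},
]


def support(itemset, transactions):
    """Fraction of transactions that contain every item in the item set."""
    itemset = set(itemset)
    return sum(itemset <= basket for basket in transactions) / len(transactions)


def confidence(antecedent, consequent, transactions):
    """How often the consequent appears in baskets that contain the antecedent."""
    both = set(antecedent) | set(consequent)
    return support(both, transactions) / support(antecedent, transactions)


def frequent_items_and_pairs(transactions, min_support):
    """Find frequent single items, then test only pairs built from them."""
    items = sorted({item for basket in transactions for item in basket})
    frequent_items = [i for i in items if support({i}, transactions) >= min_support]
    # Apriori pruning: a pair can only be frequent if both of its items are frequent.
    frequent_pairs = [
        pair
        for pair in combinations(frequent_items, 2)
        if support(pair, transactions) >= min_support
    ]
    return frequent_items, frequent_pairs


frequent_items, frequent_pairs = frequent_items_and_pairs(transactions, min_support=0.5)
bread_to_butter = confidence({"bread"}, {"butter"}, transactions)
```

A rule such as "bread implies butter" is usually kept only if both its support and its confidence exceed thresholds chosen by the analyst.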

10. Gradient Boosting

Gradient-boosting algorithms use an ensemble approach, combining “weak” models that are improved step by step until together they form a robust predictive model. Each iteration gradually reduces the errors made by the ensemble, leading to an accurate final model.

The algorithm begins with a basic, weak model, which could be based on a very simple assumption, like splitting the data according to whether it falls below or above the average. This model serves as a base.

In each iteration, the algorithm builds a new model that focuses on correcting the errors made by the previous models. It captures patterns or relationships that earlier models could not grasp and adds them to the ensemble.

Gradient boost is efficient for handling complex problems and large data sets. It can capture complex relationships and patterns that a single model might overlook. Combining the results of several models, the gradient boost creates a highly accurate predictive model.
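The "fix the previous errors" loop can be sketched for a one-variable regression problem. This example assumes squared-error loss (so the errors to fix are simply the residuals), uses one-split "stumps" as the weak models, and starts from the average as the base prediction; the data and all names are invented.

```python
def fit_stump(xs, residuals):
    """Weak model: the single threshold split on x that best fits the residuals."""
    best = None
    for threshold in sorted(set(xs))[1:]:
        left = [r for x, r in zip(xs, residuals) if x < threshold]
        right = [r for x, r in zip(xs, residuals) if x >= threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = sum((r - left_mean) ** 2 for r in left)
        error += sum((r - right_mean) ** 2 for r in right)
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    return best[1:]


def stump_predict(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x < threshold else right_mean


def gradient_boost(xs, ys, n_rounds=20, learning_rate=0.5):
    """Start from the average, then repeatedly fit a stump to the remaining errors."""
    base = sum(ys) / len(ys)
    stumps = []
    predictions = [base] * len(xs)
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        predictions = [
            p + learning_rate * stump_predict(stump, x)
            for p, x in zip(predictions, xs)
        ]
    return base, stumps, learning_rate


def boosted_predict(model, x):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(stump_predict(s, x) for s in stumps)


def mean_squared_error(predict, xs, ys):
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data with a jump between x = 3 and x = 4
xs = [1, 2, 3, 4, 5, 6]
ys = [1.0, 1.2, 0.9, 5.0, 5.2, 4.9]

model = gradient_boost(xs, ys)
baseline_error = mean_squared_error(lambda x: model[0], xs, ys)
boosted_error = mean_squared_error(lambda x: boosted_predict(model, x), xs, ys)
```

The learning rate scales down each correction, so the ensemble improves in small steps instead of trusting any single weak model too much.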

What is a Machine Learning Model?

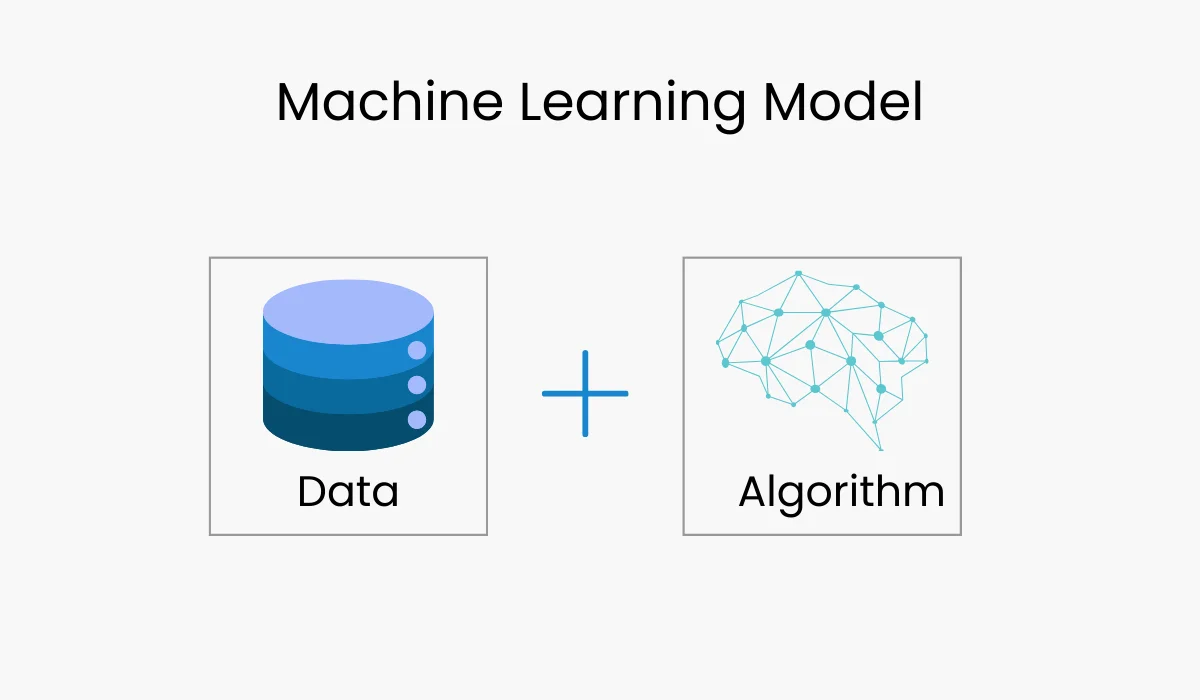

A machine learning model is the outcome of running an algorithm over the data using any of the above types of algorithms.

After training, you can apply the model to make predictions on new data or on similar data sets. The model makes predictions with a certain degree of accuracy and confidence, depending on how well it was trained.

How are Machine Learning Models Created?

Three primary steps are involved in creating a machine learning model.

Data Collection and Preparation: The first stage involves collecting a data set, with labels if the task is supervised. The data is gathered from many sources, such as sensors, surveys, and databases.

Feature Engineering: After the data has been gathered and analyzed, it must be processed and converted into a format the machine learning algorithm can read. This is known as feature engineering.

Model Training: The last step is training the machine learning algorithm on the data. This is done by feeding the algorithm the data and letting it learn the patterns and connections between the elements.

ML Algorithm vs. Machine Learning Model

Once you understand the basics of models and algorithms, it’s easy to see how they interact to produce machine learning solutions. Here is a concise overview of their distinctions and interactions.

Definition and Function

- Algorithm: Machine-learning algorithms are a step-by-step process (or set of guidelines) used to process data to discover patterns and connections. They are the methods data scientists use to study data and create understanding.

- Model: Models are the product of an algorithm trained on data. They are the result of learning patterns and are used to predict or make choices based on fresh, unknown data.

Role in Machine Learning

- Algorithm: The primary role of an algorithm is to “train” a model. It processes the training data, detects patterns, and alters parameters to build a model that can predict outcomes or categorize the data points.

- Model: The model is the final product of the machine learning process. It embodies the patterns learned from the training data and is used to make predictions or classifications when new data arrives.

Persistence of Data

- Algorithm: Most algorithms don’t save data once the model has been created. They’re simply tools used to construct the model. After the model has been constructed, the algorithm’s work is complete.

- Model: Different models deal with data differently. Certain models, like the k-nearest neighbors (KNN), keep the entire dataset and employ it for prediction. Other models, such as linear regression, save only the parameters learned and then discard any original information.

Purpose and Focus

- Algorithms: When choosing an algorithm, focus on the training method used and the kind of model you want to create. Different algorithms can be adapted to various types of issues (e.g., clustering, classification, regression).

- Model: The model is a result we are concerned about in real-world applications. It’s what we use to predict outcomes or automate the process of making decisions in real-world situations.

Choosing the Right Tool

- Algorithm: Selecting the appropriate algorithm is vital since it directly affects your model’s performance. For instance, if the problem is predicting continuous values, algorithms such as linear regression or decision trees may be suitable.

- Model: Once the best algorithm for your needs has been chosen and run on the data, the model is built. The effectiveness of the model depends on how well the selected algorithm was able to learn from the data and produce accurate predictions.

In essence, although the algorithm acts as the engine driving the design of the model, the actual product we use to perform real-world tasks is the model. The most important thing is to choose the correct algorithm so that the model works well.
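One way to see the distinction in code is to treat the algorithm as a training function and the model as whatever that function returns. The sketch below contrasts linear regression, whose model is two learned numbers, with KNN, whose model is the stored data set. All names and numbers are illustrative.

```python
def train_linear_regression(xs, ys):
    """Algorithm: least squares. The returned model is just two learned numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return {"slope": slope, "intercept": mean_y - slope * mean_x}


def train_knn(xs, ys):
    """Algorithm: KNN. Training only stores the data, so the model is the data set."""
    return {"xs": list(xs), "ys": list(ys)}


def predict_linear(model, x):
    return model["slope"] * x + model["intercept"]


def predict_knn(model, x, k=2):
    """Average the outputs of the k stored points closest to x."""
    pairs = sorted(zip(model["xs"], model["ys"]), key=lambda pair: abs(pair[0] - x))
    return sum(y for _, y in pairs[:k]) / k


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

linear_model = train_linear_regression(xs, ys)  # training data can now be discarded
knn_model = train_knn(xs, ys)                   # training data lives inside the model
```

In both cases the training function is finished once it returns; only the model is needed to make predictions afterwards.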

Examples of ML Models

Here are a few examples of models based on Machine Learning that fall into different categories:

1. Supervised Learning Models

Linear Regression: This statistical technique describes the relationship between variables. It is often employed to forecast a continuous numerical output, like the cost of a house, based on its size, location, and other features.

Logistic Regression: This statistical method is employed to predict a binary categorical outcome, for example, whether a customer will churn. It is often used in classification tasks.

Decision Tree: A tree-like structure that makes decisions using a series of questions. It is typically used for regression and classification tasks.

Support Vector Machine (SVM): This classification algorithm determines the optimal hyperplane to divide two classes of data. With kernel functions, it can also be used in situations where the data isn’t linearly separable.

Naive Bayes: A probabilistic classification algorithm built on Bayes’ theorem. It is typically employed for tasks involving text classification, like spam filtering.

K-Nearest Neighbors (KNN): A classification algorithm that categorizes data points according to their similarity to other data points. It is typically used for tasks where the data is not linearly separable.

2. Unsupervised Learning Models

Principal Component Analysis (PCA): This dimensionality reduction algorithm reduces the number of features in the data. It is typically used in tasks such as feature engineering and data preprocessing.

Hierarchical Clustering: This is an algorithm for building a hierarchy of clusters from data points. It is often employed for tasks like image segmentation and anomaly detection.

Anomaly Detection: This is a process employed to find data points that are anomalous or differ from the norm. It is often used for purposes such as detecting fraud and network intrusions.

3. Reinforcement Learning Models

Q-learning: Q-learning is a reinforcement learning algorithm employed to develop a strategy for determining the best actions to take in the environment. It is typically used for tasks like robotics or games.

Deep Q-networks (DQNs): A DQN is a reinforcement learning algorithm that combines Q-learning with deep learning. It is typically used for tasks with very large state spaces.

Policy Gradient: This is a form of reinforcement learning algorithm used to teach a system to select actions within an environment. It is typically used for tasks with continuous state or action spaces.

Actor-Critic: This is a reinforcement learning algorithm that combines two separate neural networks, an actor and a critic. The actor decides on actions, while the critic evaluates them. It is typically used for tasks with very large state spaces.

Real-World Examples of ML in Action

Machine learning (ML) applications are gradually becoming common in businesses, and their usage will increase in the coming years. At present, these sectors are using machine learning development services.

1. Health

Machine Learning is making essential contributions to the healthcare sector. The algorithms help in the analysis of medical images, detecting disease outbreaks, and diagnosis of various ailments. The predictive models based on ML can help determine patients at risk of developing certain illnesses by analyzing their health history and lifestyle.

2. Finance

In the field of finance, ML models help with fraud detection, algorithmic trading, and credit scoring. Fraud detection models identify patterns in transaction data to spot abnormal behavior and flag potential fraud. Algorithmic trading also relies on sophisticated ML models to make split-second decisions on financial markets.

3. Retail

E-commerce platforms depend heavily on Machine Learning for personalized recommendations, demand forecasting, and customer service. Recommendation systems analyze users’ behavior to recommend items that match their personal preferences.

AI models for inventory management are used by manufacturers and eCommerce businesses to make sure that inventories have sufficient stock to meet customers’ requirements.

4. Autonomous Vehicles

The auto industry now incorporates machine learning into the development of autonomous vehicles. These vehicles use cameras and sensors to collect real-time information. ML algorithms analyze the data to handle route planning, obstacle avoidance, and interaction with traffic.

These are only some of the numerous ways ML is employed across different industries to make business operations smoother and customers’ experiences more enjoyable. As ML grows, we are likely to witness more creative and revolutionary applications in the coming years.

Challenges and Considerations in Machine Learning

Machine learning continues to advance and become essential to technology and business, so understanding the issues involved in creating and deploying models is vital. Although machine learning models provide significant insights and automation, their performance depends on how they are created, maintained, and optimized.

In this section, we’ll look at three key problems: overfitting and underfitting, hyperparameter tuning, and the bias-variance trade-off, and how they affect machine learning algorithms and models.

1. Overfitting and Underfitting: Striking the Right Balance

One of the most frequent problems regarding machine learning concerns balancing underfitting and overfitting. Both of these problems directly affect the performance of models that use machine learning and are affected by the algorithm chosen and the quantity of data used for training.

- Overfitting

Overfitting occurs when a model has learned the data it was trained on too well, including its noise and outliers. This means the model can perform exceptionally well on the training data but does not generalize to new, unseen data. Overfit models are usually too complex and capture details of the training data that are unnecessary.

For instance, a decision tree with too many branches may be able to fit every data point of the training set but do poorly on a test set. Overfitting usually indicates that the model is tailored to the particulars of the training data and cannot generalize.

- Underfitting

Underfitting is the reverse of overfitting. It happens when a model is too simplistic and fails to capture the underlying patterns in the data. Underfit models are often unable to make accurate predictions since they have not learned enough from the data.

For instance, applying a linear model to data with nonlinear relationships will cause underfitting. The model won’t be able to capture the complexity of the data, leading to poor performance on both the training and test sets.

Data scientists should choose the appropriate algorithm and model complexity to prevent underfitting and overfitting. Regularization methods, like L1 (Lasso) or L2 (Ridge) regularization, are commonly employed to avoid overfitting by adding penalties for large coefficients.

Cross-validation methods can help in selecting models that can be generalized well by evaluating the performance of different subsets of data.
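To show what "different subsets of data" means in practice, here is a minimal sketch of k-fold splitting, one common form of cross-validation. It only produces the index splits; training and scoring a model on each split is left out, and the function name is an assumption.

```python
def k_fold_indices(n_samples, k):
    """Split sample indices into k folds; each fold serves once as the test set."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    indices = list(range(n_samples))
    folds = []
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        folds.append((train_idx, test_idx))
        start += size
    return folds


folds = k_fold_indices(n_samples=10, k=3)
```

A model would be trained on each `train_idx` and scored on the matching `test_idx`, and the scores averaged. In practice the data is usually shuffled before splitting.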

2. Hyperparameter Tuning: The Key to Model Optimization

Hyperparameters are the settings or configurations that control the behavior of a machine learning algorithm during training. Unlike parameters, which are learned from the training data, hyperparameters are set before the learning process begins.

They can have a significant impact on the effectiveness of the algorithm. Examples of hyperparameters include the learning rate of neural networks, how many trees make up a random forest, and the penalty term used in regularization.

- Significance of Hyperparameter Tuning

Hyperparameter tuning is the process of optimizing these settings to get the best possible performance from the model. The right combination of hyperparameters can result in a model that performs well on training data and generalizes well to new datasets.

For example, in the case of a support-vector machine (SVM), the selection of the kernel and the regularization parameter can significantly impact the ability of the model to classify data points correctly.

- Techniques for Tuning Hyperparameters

Common techniques for tuning hyperparameters include grid search, random search, and more sophisticated methods such as Bayesian optimization. Grid search performs an exhaustive search through a predefined set of hyperparameter values to determine the best combination.

Random search, by contrast, randomly samples combinations of hyperparameters to test. Bayesian optimization employs a probability-based approach, constructing a surrogate model to estimate which combinations of hyperparameters will perform best and focusing the search on the most promising areas.

Tuning hyperparameters is time-consuming and expensive, particularly for models with multiple hyperparameters. However, investing time into this process is essential to getting the best performance from your model.
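The difference between grid search and random search can be sketched in a few lines. The scoring function below is a stand-in invented for this example; in a real project it would train a model with the given hyperparameters and return a validation score.

```python
import itertools
import random


def validation_score(params):
    """Hypothetical score that peaks at learning_rate=0.1 and n_trees=200."""
    rate_penalty = (params["learning_rate"] - 0.1) ** 2
    tree_penalty = ((params["n_trees"] - 200) / 1000) ** 2
    return -rate_penalty - tree_penalty


search_space = {
    "learning_rate": [0.01, 0.1, 1.0],
    "n_trees": [50, 200, 500],
}


def grid_search(space, score):
    """Try every combination of the listed values and keep the best one."""
    names = list(space)
    candidates = [
        dict(zip(names, combo)) for combo in itertools.product(*space.values())
    ]
    return max(candidates, key=score)


def random_search(space, score, n_trials, rng):
    """Try a fixed number of randomly drawn combinations and keep the best one."""
    candidates = [
        {name: rng.choice(values) for name, values in space.items()}
        for _ in range(n_trials)
    ]
    return max(candidates, key=score)


best_by_grid = grid_search(search_space, validation_score)
best_by_random = random_search(search_space, validation_score, 4, random.Random(0))
```

Grid search evaluates all nine combinations here, while random search evaluates only four, which is the trade-off between thoroughness and cost.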

3. Bias and Variance: Managing the Trade-Off

A further fundamental idea essential to machine learning is the balance between variance and bias, which directly affects the generalization capacity of models.

- Bias

Bias is the error caused by approximating a real problem, which may be complicated, with an overly simple model. Models with high bias are usually too simplistic and rely on strong assumptions regarding the data. In the end, they tend to underfit the data, which can lead to systematic mistakes in predictions. For instance, a linear regression model could have a high degree of bias if the real relationship between variables is not linear.

- Variance

Variance refers to a model’s sensitivity to small variations in the training data. Models with high variance tend to be extremely complex and fit the training data very closely, including its noise and outliers. This can lead to overfitting, which is when the model performs excellently on training data but not so well on new data. Decision trees and k-nearest neighbor models are two examples of models that may have high variance if they are not properly regularized.

- Bias-Variance Trade-off

The trade-off between bias and variance is an important issue in machine learning. A model with high bias and low variance will underfit and not be able to predict the data accurately, while a model with low bias and high variance is likely to overfit the training data.

The aim is to strike the proper balance, that is, a model with low enough bias to accurately identify the fundamental patterns of the data and low enough variance to generalize well to new data.

To control the trade-off between bias and variance, data scientists may employ methods such as cross-validation, regularization, and ensemble techniques like bagging and boosting, which blend different models to reduce variance while keeping bias low. Regularization methods add a penalty for more complicated models and help to reduce variance without significantly increasing bias.

Practical Implications for Machine Learning Development

Understanding the challenges is essential in developing efficient machine-learning models that can be utilized in real-world scenarios. The selection of algorithms, the complexity of models, hyperparameters, and the management of variance and bias all play a major part in the overall performance of a machine learning project.

- Model Evaluation and Validation

Regularly evaluating models with techniques such as cross-validation helps ensure that the chosen model is sound and generalizes well. This is particularly important for enterprise AI development, where models are frequently employed to make important business decisions.

- Continuous Monitoring and Updates

Even after deployment, machine-learning models require constant monitoring to ensure they function optimally as new data becomes available. Retraining the model with current data is a way to ensure its relevance and accuracy.

- Ethical Considerations

As models become more complex, it is important to be aware of the ethical implications of machine learning, which include issues of accountability, fairness, and transparency. Ensuring that models are free of bias and capable of being interpreted can help create trust among users and other stakeholders.

The Future of Machine Learning

While technology continues to develop, Machine Learning (ML) is expected to progress in exciting ways. ML is being utilized across a range of fields, and its usage will only increase in the near future.

Researchers are continually developing new and more efficient ML algorithms. These algorithms can analyze complex data, create precise predictions, and run on more robust hardware.

As ML models become more complex, explaining and understanding their decisions becomes crucial. This can help build confidence in ML systems and ensure they are employed ethically and responsibly.

Conclusion

Machine learning is an incredible technology for improving processes, products, and research. However, machines do not make decisions or provide predictions on their own, which can be an obstacle to implementing machine learning. Models and algorithms work together to make this happen, so it is important to understand each one better.

Machine learning models are built on algorithms that take inputs and generate outputs. They can be described as programs that detect patterns that are not obvious or make decisions based on previous information.

On the other hand, algorithms are the engine behind machine learning model development. They inform computers what to do and what to accomplish in a precise, simple manner.